EU AI Act Compliance Made Simple — Classify, Assess, and Prove Compliance in One Platform

- Covers all EU AI Act risk categories

- Used in 47+ countries

- Backed by GAICC

What Is the EU AI Act?

The EU AI Act is the world’s first comprehensive legal framework for artificial intelligence. Adopted by the European Parliament in March 2024, it entered into force on August 1, 2024, and is reshaping how organizations develop, deploy, and govern AI systems globally.

Who does it apply to? The EU AI Act applies to ANY organization deploying or providing AI systems that affect people in the EU — regardless of where your company is headquartered. If your AI system outputs are used by people in the European Union, you must comply with the EU AI Act.

What’s the core principle? The regulation adopts a risk-based approach: different obligations apply depending on the risk level of the AI system. This means compliance is proportional and targeted, protecting high-impact systems with stricter requirements while maintaining innovation flexibility for lower-risk systems.

Who must comply? The law covers providers, deployers, importers, and distributors of AI systems. Even if you’re just using an AI system developed elsewhere, you may have regulatory obligations as a deployer.

What are the penalties? Non-compliance carries significant consequences: up to €35 million or 7% of global annual turnover (whichever is higher) for prohibited AI practices, and up to €15 million or 3% of global annual turnover for high-risk AI system violations. The percentages are calculated on worldwide turnover, not EU revenue alone, making compliance essential for any organization of scale.

EU AI Act Risk Classification — Where Do Your AI Systems Fall?

BANNED

Unacceptable Risk

Examples of prohibited practices:

- Social scoring of individuals, whether by public authorities or private actors

- Real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrowly defined exceptions)

- Subliminal manipulation designed to distort behavior

- Exploitation of vulnerabilities in specific groups (children, elderly, disabled)

- Emotion recognition in workplaces and educational institutions

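- Mass surveillance practices, such as untargeted scraping of facial images from the internet or CCTV footage to build facial recognition databases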

Enforcement Deadline: February 2, 2025 — ALREADY IN EFFECT

HIGH RISK

High-Risk AI Systems

Key areas (EU AI Act Annex III):

- Biometric identification and categorization

- Critical infrastructure management

- Education and vocational training (access determination)

- Employment (recruitment, promotion, termination decisions)

- Law enforcement and criminal justice

- Migration, asylum, and border control

- Administration of justice

Requirements: Risk management system, data governance, technical documentation, human oversight, performance monitoring, record-keeping, transparency, and user notification.

Enforcement Deadline: August 2, 2026 (16 months away)

LIMITED RISK

Limited-Risk AI Systems

Examples:

- Chatbots and conversational AI (must disclose AI nature)

- Emotion recognition systems

- Biometric categorization (age, gender, etc.)

- AI-generated content and deepfakes

- Content recommendation systems

Requirements: Transparency and disclosure obligations only. You must inform users they're interacting with AI.

Enforcement Deadline: August 2, 2026

MINIMAL

Minimal-Risk AI Systems

Examples:

- AI-powered video games and entertainment

- Spam filters and email classification

- Inventory and supply chain optimization

- Recommendation engines (non-critical contexts)

- General productivity tools

Recommendation: While not legally required, voluntary codes of conduct are encouraged to demonstrate responsible AI practices.

Compliance Level: Minimal legal requirements
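The four tiers above can be sketched as a small inventory structure. This is an illustrative sketch only, not how Govern365 or any regulator models the tiers: the class names, field names, and the `obligations_in_force` helper are hypothetical, and the summaries and dates are transcribed from the tier descriptions on this page.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass(frozen=True)
class TierProfile:
    summary: str
    applies_from: Optional[date]  # None where the page lists no deadline


# Summaries and dates transcribed from the tier descriptions above.
TIER_PROFILES = {
    RiskTier.UNACCEPTABLE: TierProfile(
        "Prohibited; must be removed from use", date(2025, 2, 2)),
    RiskTier.HIGH: TierProfile(
        "Risk management, data governance, technical documentation, "
        "human oversight, monitoring, record-keeping, transparency",
        date(2026, 8, 2)),
    RiskTier.LIMITED: TierProfile(
        "Transparency and disclosure obligations", date(2026, 8, 2)),
    RiskTier.MINIMAL: TierProfile(
        "Voluntary codes of conduct encouraged", None),
}


@dataclass
class AISystem:
    name: str  # hypothetical inventory label
    tier: RiskTier


def obligations_in_force(system: AISystem, today: date) -> bool:
    """Return True if the tier's obligations already apply on `today`."""
    profile = TIER_PROFILES[system.tier]
    return profile.applies_from is not None and today >= profile.applies_from


screener = AISystem("resume-screener", RiskTier.HIGH)
print(TIER_PROFILES[screener.tier].summary)
print(obligations_in_force(screener, date(2026, 9, 1)))  # True
```

Assigning the tier is the hard part and is left to a human reviewer here; the sketch only records the result and looks up what follows from it.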

EU AI Act Compliance Timeline — Key Dates You Cannot Miss

The EU AI Act’s compliance timeline spans multiple phases. Missing these deadlines carries significant penalties.

August 1, 2024: The regulation officially becomes law across all EU member states. Providers and deployers should begin preparation.

February 2, 2025: CRITICAL DEADLINE. All unacceptable-risk AI systems must be removed from use. This deadline has already passed, so organizations must ensure compliance immediately.

August 2, 2025: General Purpose AI (GPAI) model obligations become enforceable. Organizations must establish AI governance structures and policies. This is your last chance to build governance foundations.

August 2, 2026: MAJOR ENFORCEMENT DEADLINE. All high-risk AI systems must be fully compliant. This includes completed conformity assessments, risk management systems, technical documentation, and registration in the EU database. This is 16 months away.

August 2, 2027: Full obligations for high-risk AI systems that are safety components of other regulated products (e.g., AI in medical devices, industrial machinery).

TIME TO ACT: Organizations deploying high-risk AI systems have approximately 16 months (until August 2, 2026) to achieve full compliance. Every week counts. Starting your compliance journey today is non-negotiable.
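To keep the countdown honest as time passes, the remaining months can be computed rather than hard-coded. The sketch below is a minimal illustration with hypothetical names (`MILESTONES`, `whole_months_between`, `upcoming`). The August 2, 2025 and August 2, 2027 dates are taken from the Act's published application schedule; the other dates appear elsewhere on this page.

```python
from datetime import date

# Milestones in chronological order. The 2025-08-02 and 2027-08-02 dates
# are assumptions taken from the Act's application schedule.
MILESTONES = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Prohibited practices must cease"),
    (date(2025, 8, 2), "GPAI model obligations apply"),
    (date(2026, 8, 2), "High-risk systems must be compliant"),
    (date(2027, 8, 2), "High-risk safety components of regulated products"),
]


def whole_months_between(start: date, end: date) -> int:
    """Count complete calendar months from start to end (never negative)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month is not yet complete
    return max(months, 0)


def upcoming(today: date):
    """Return (date, label, months_left) for milestones not yet passed."""
    return [
        (when, label, whole_months_between(today, when))
        for when, label in MILESTONES
        if when >= today
    ]


for when, label, months_left in upcoming(date(2025, 4, 2)):
    print(f"{when.isoformat()}  {label}: {months_left} months left")
```

Run with a reference date of April 2, 2025, the high-risk milestone comes out at 16 months, matching the figure quoted above; passing `date.today()` instead gives the current countdown.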

EU AI Act Penalties — The Cost of Non-Compliance

Prohibited AI Practices

€35M or 7%

High-Risk Non-Compliance

€15M or 3%

Procedural Violations

€7.5M or 1%

Penalties Apply Worldwide:

These penalties apply to your entire global revenue, not just EU operations. For a company with €500M in revenue, a 7% penalty equals €35M — a material business impact. The EU is serious about enforcement, and regulators have already begun investigations.
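The arithmetic behind these ceilings can be sketched in a few lines. This is a rough illustration, not legal advice: the tier keys and the `max_penalty_eur` helper are hypothetical, the figures are transcribed from the penalty table above, and the sketch assumes the "whichever is higher" rule applies to all three tiers (it ignores any special treatment for smaller companies).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    label: str
    fixed_amount_eur: int   # fixed ceiling in euros
    turnover_percent: int   # percentage of global annual turnover


# Figures transcribed from the penalty table above; the keys are hypothetical.
PENALTY_TIERS = {
    "prohibited": PenaltyTier("Prohibited AI practices", 35_000_000, 7),
    "high_risk": PenaltyTier("High-risk non-compliance", 15_000_000, 3),
    "procedural": PenaltyTier("Procedural violations", 7_500_000, 1),
}


def max_penalty_eur(tier_key: str, global_turnover_eur: int) -> int:
    """Return the higher of the fixed ceiling and the turnover-based ceiling."""
    tier = PENALTY_TIERS[tier_key]
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = global_turnover_eur * tier.turnover_percent // 100
    return max(tier.fixed_amount_eur, turnover_based)


print(max_penalty_eur("prohibited", 500_000_000))    # 35000000
print(max_penalty_eur("prohibited", 2_000_000_000))  # 140000000
print(max_penalty_eur("high_risk", 100_000_000))     # 15000000
```

At €500M in turnover the two ceilings for prohibited practices coincide at €35M. Above that, the turnover-based figure is the one that applies, while smaller companies still face the fixed amount.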

How Govern365 Makes EU AI Act Compliance Achievable

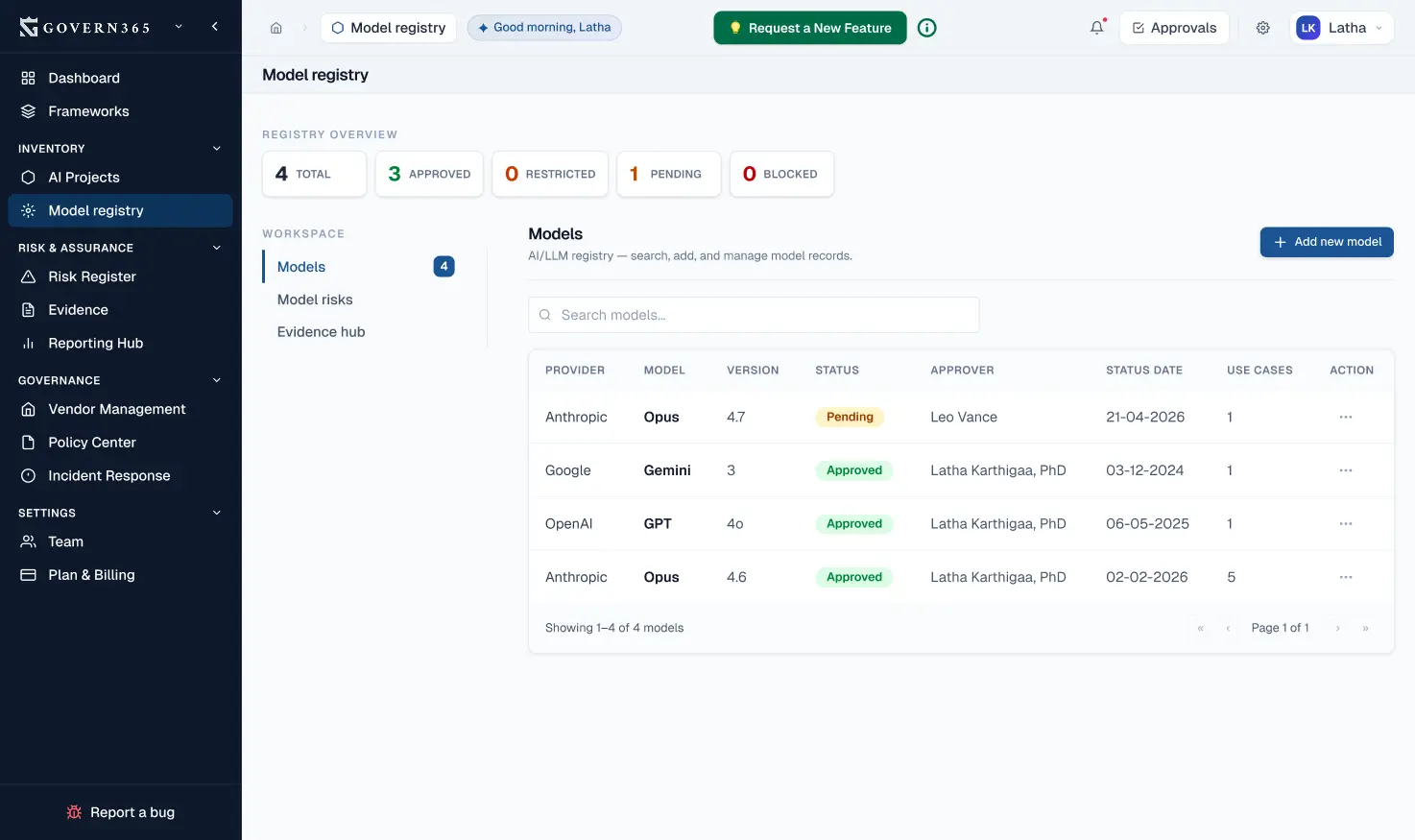

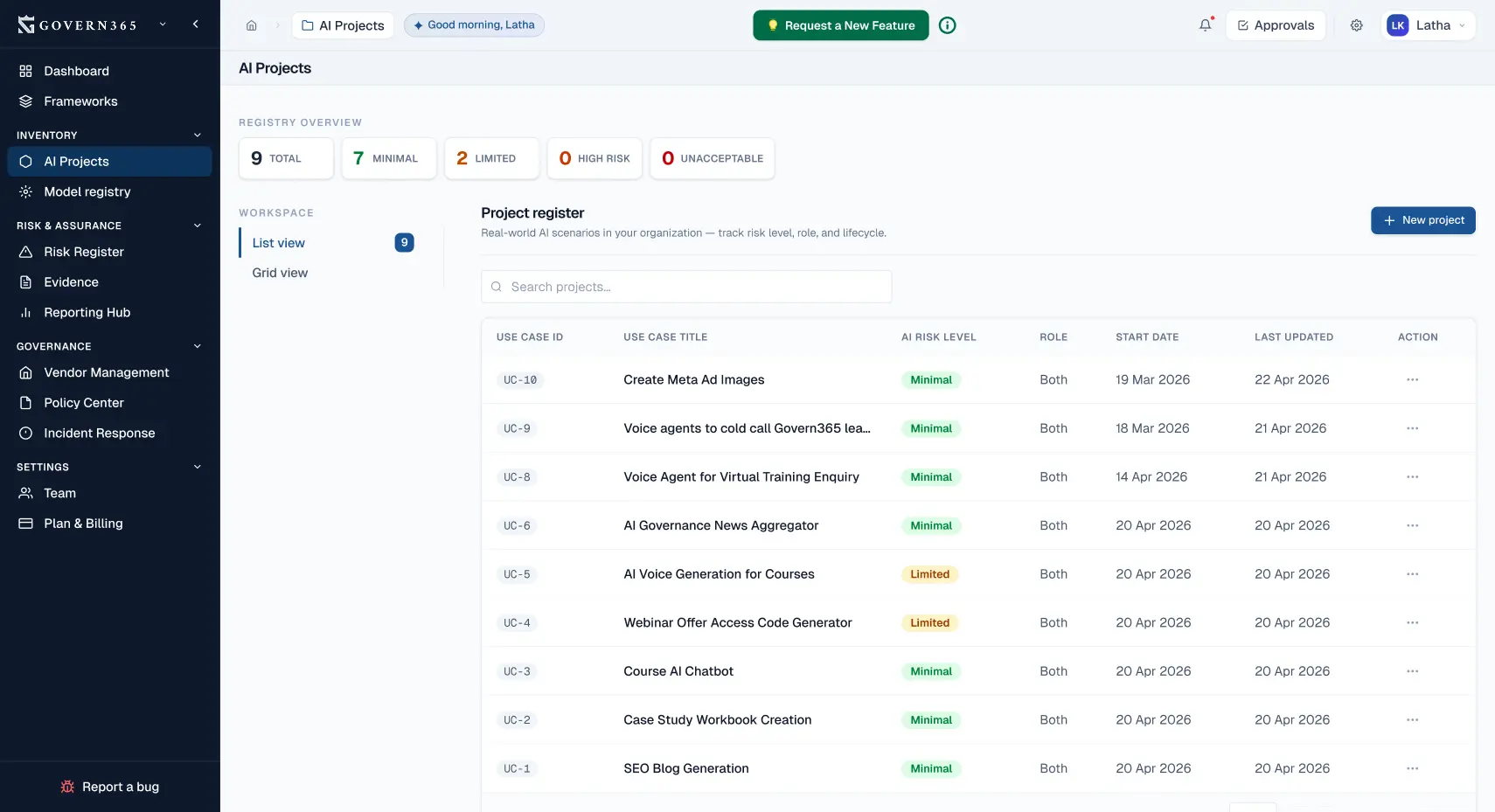

- AI System Classification & Registry

Why it matters: Article 49 of the EU AI Act requires high-risk AI systems to be registered in the EU database established under Article 71. A complete internal registry is your foundation for proving compliance to regulators.

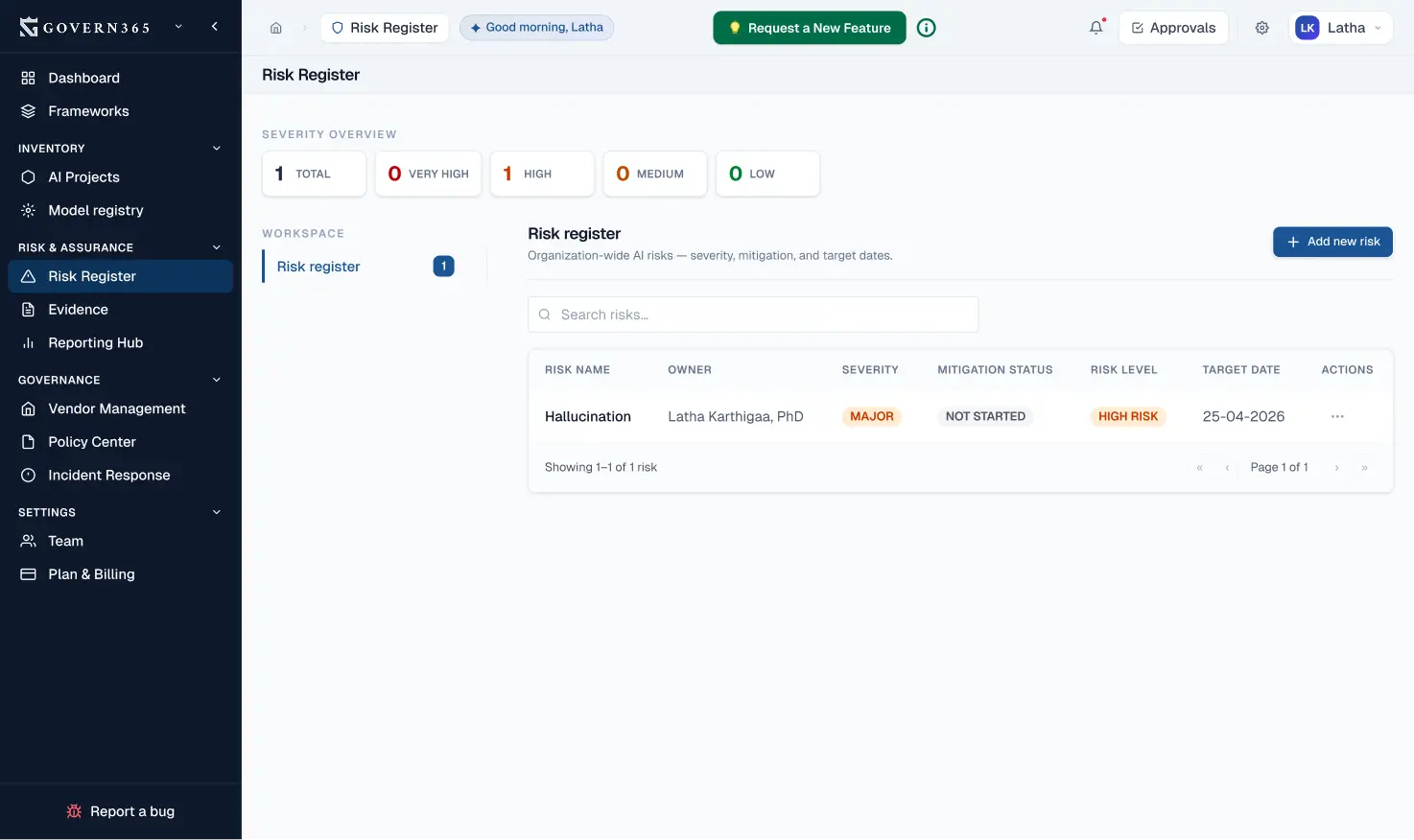

- Risk Assessment Aligned to EU AI Act

Why it matters: Risk assessment documentation is central to high-risk AI compliance. Regulators expect comprehensive risk analysis showing you understand potential harms and have mitigation strategies.

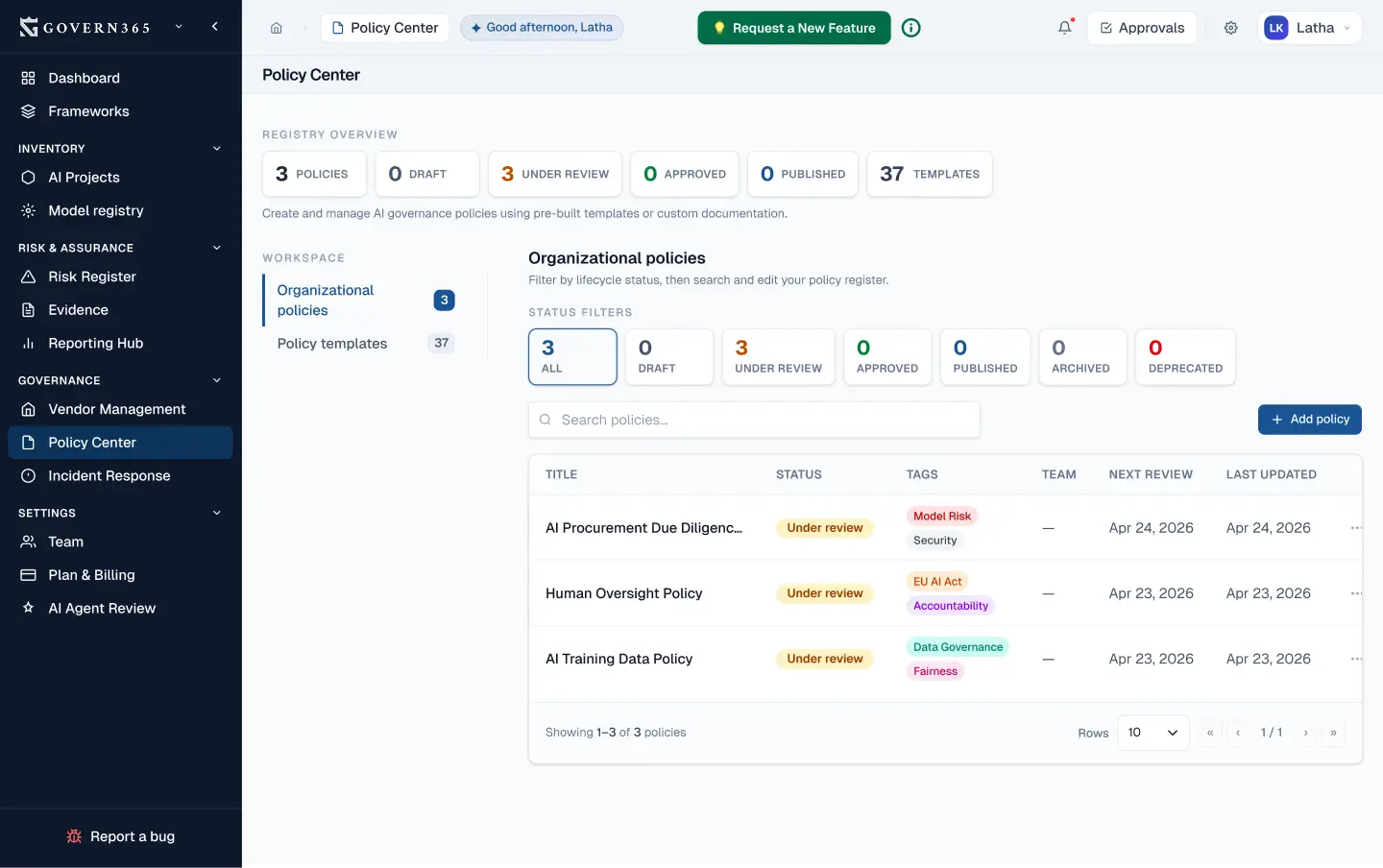

- Pre-Built EU AI Act Policy Templates

Why it matters: Documented policies prove intent and systematic governance. Regulators expect to see formal policies governing AI risk management, incident response, and transparency practices.

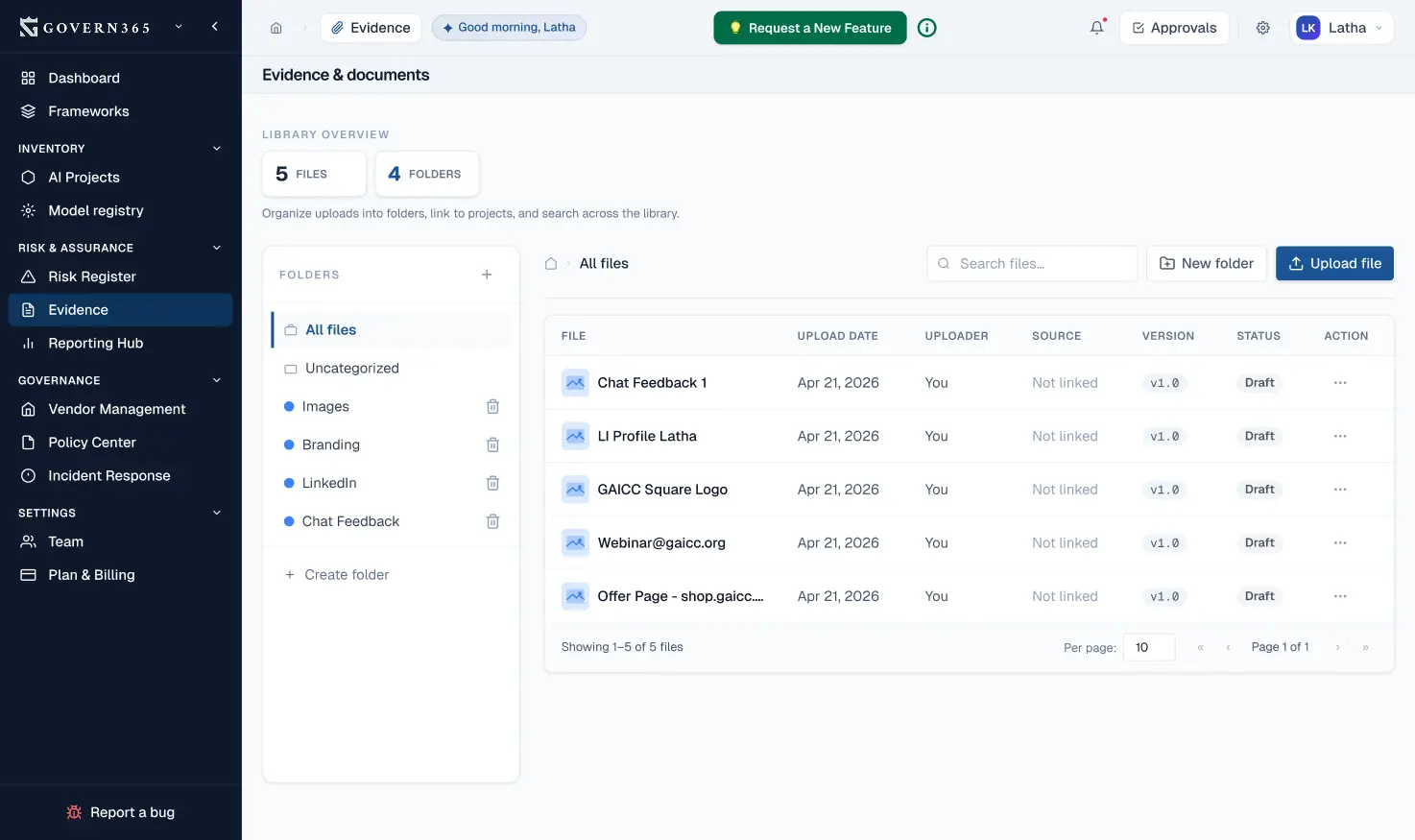

- Conformity Assessment & Evidence Vault

Why it matters: Conformity assessments are mandatory for high-risk systems. You need to demonstrate compliance with quality, data governance, and technical requirements. Regulators will request this documentation.

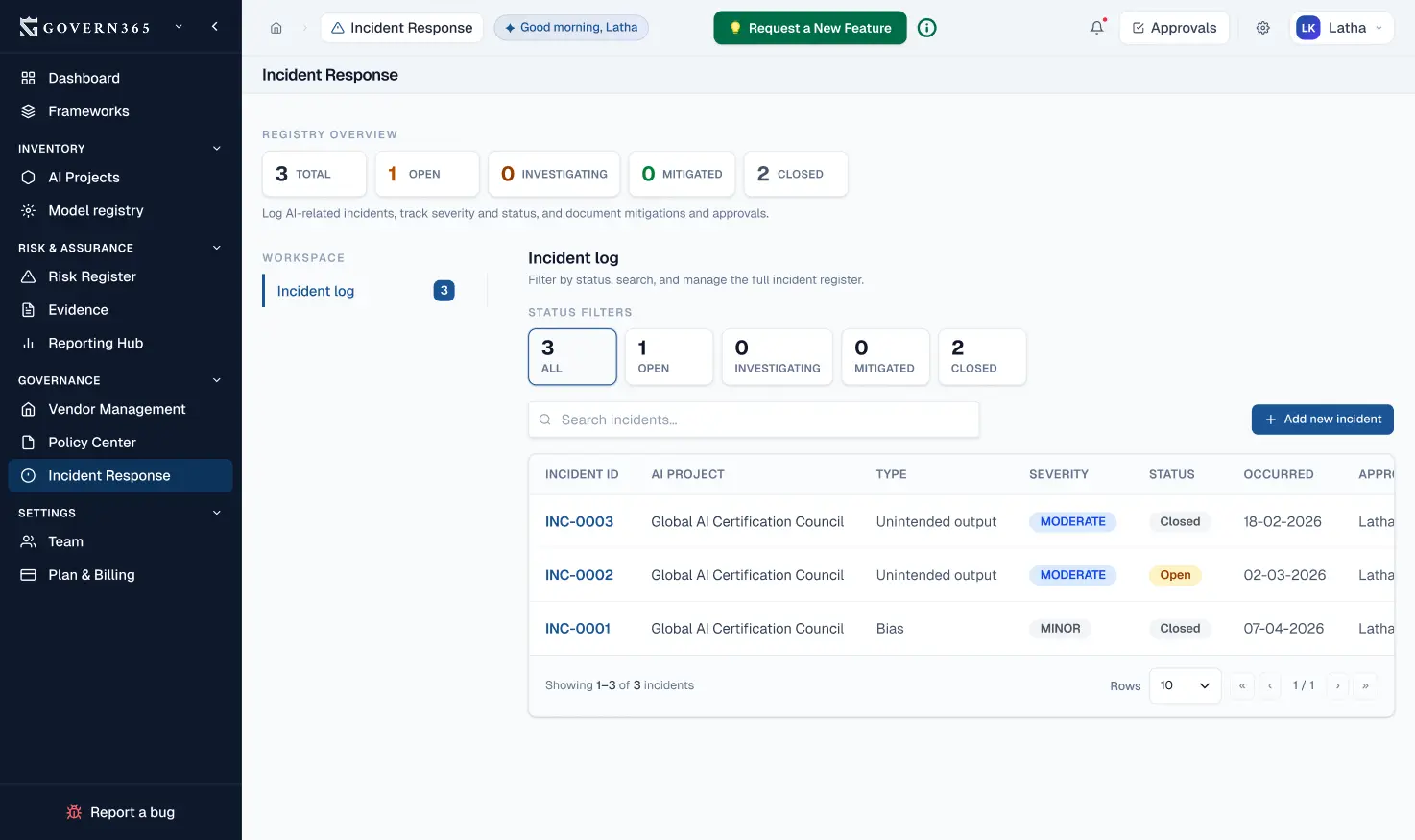

- Incident Reporting & Management

Why it matters: You must be able to quickly identify, log, analyze, and report serious AI incidents. Regulators are empowered to request incident data, and documentation proves you take incident management seriously.

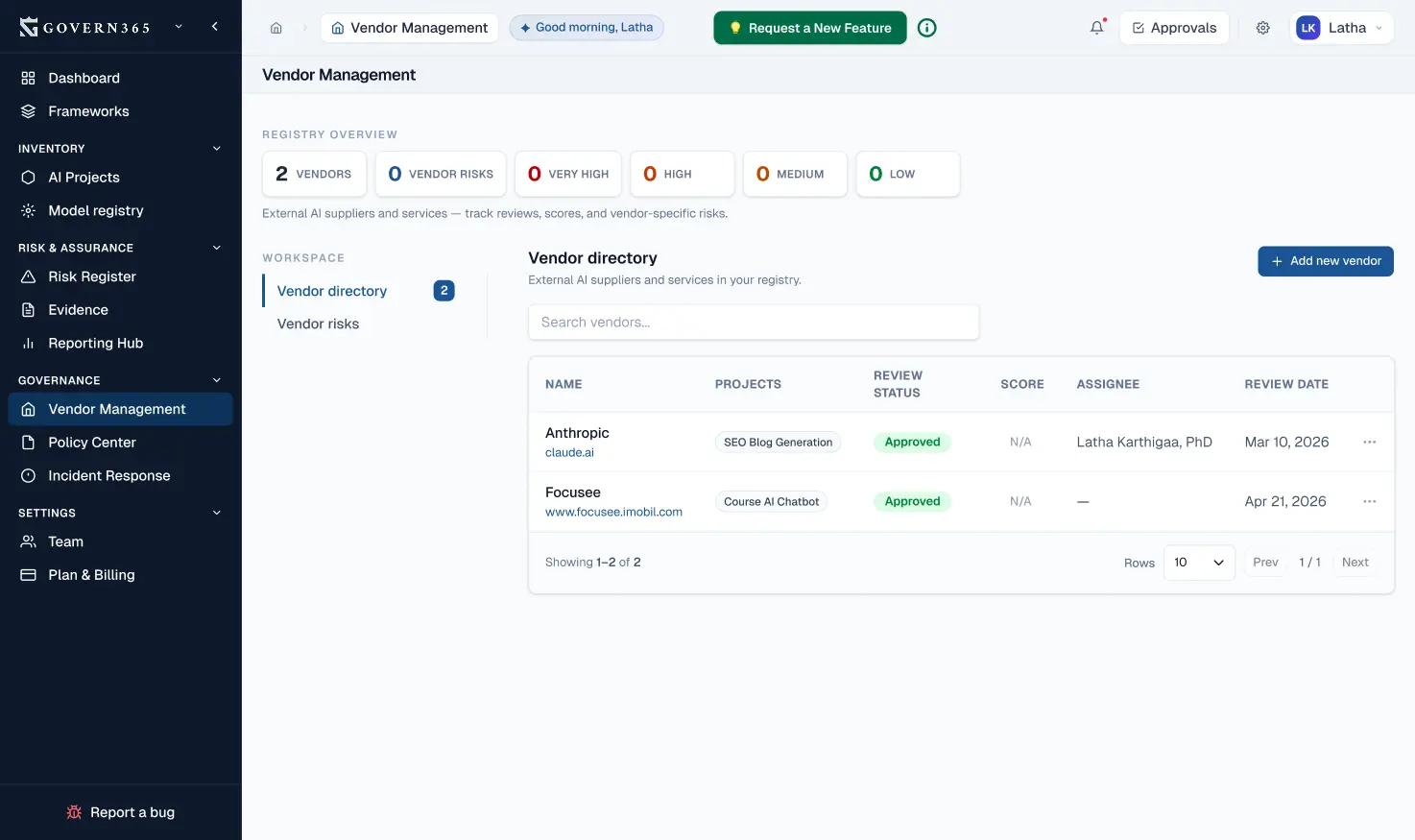

- Vendor & Supply Chain Compliance

Why it matters: You’re responsible for the AI systems you deploy, even if third parties built them. Vendor management and due diligence are critical for supply chain compliance.

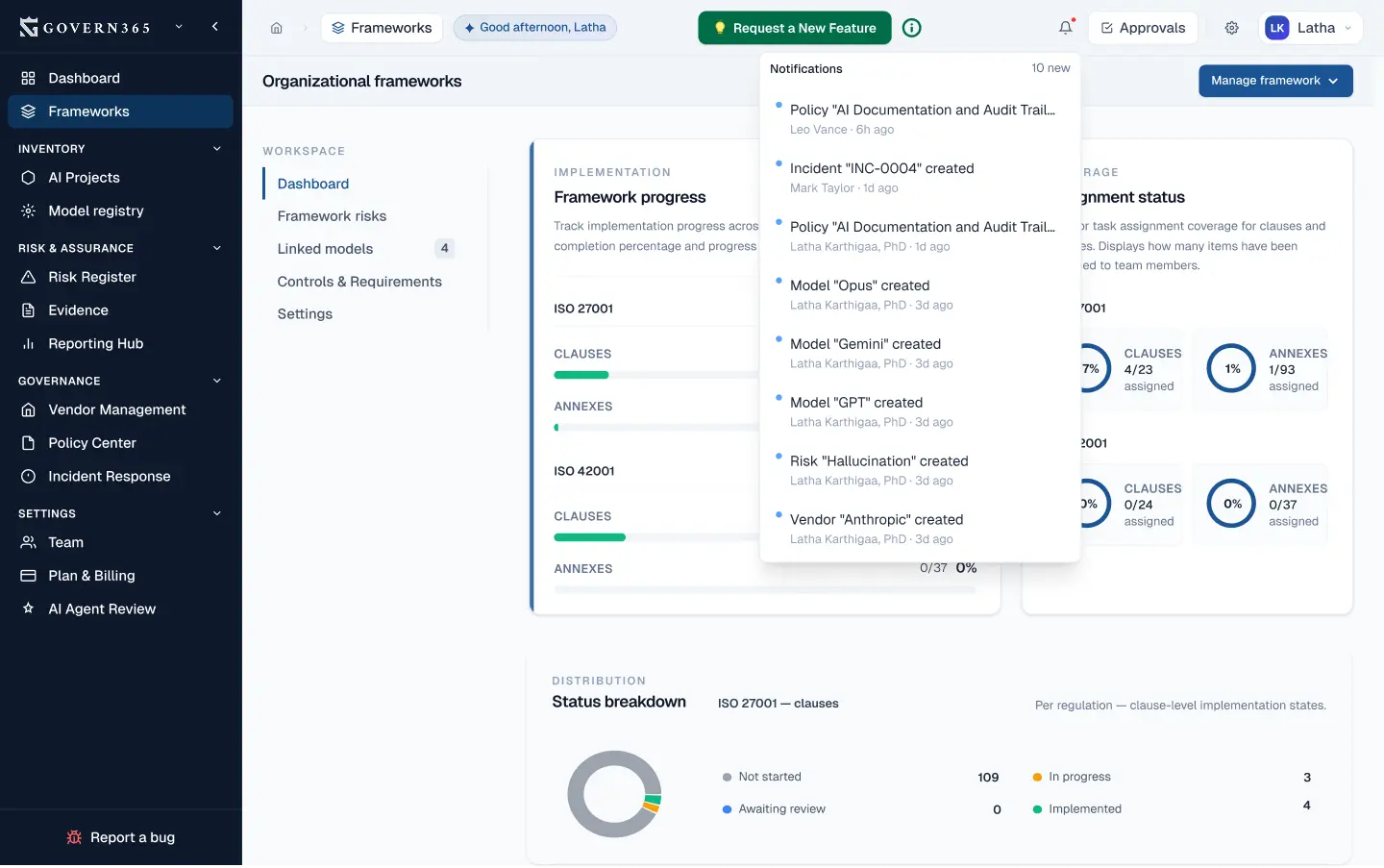

- Continuous Monitoring & Compliance Dashboard

Why it matters: Compliance is not a one-time project. You need ongoing visibility into your compliance status, progress toward deadlines, and areas needing attention.

- Audit-Ready Evidence Generation

Why it matters: When regulators request compliance documentation, you need to respond quickly and comprehensively. Govern365 ensures your evidence is organized, complete, and export-ready.

Your EU AI Act Compliance Checklist

- Inventory and classify all AI systems by risk level (Unacceptable, High, Limited, Minimal)

- Implement a risk management system for high-risk AI systems

- Establish data governance and data quality measures

- Create technical documentation for high-risk systems (design, training, testing, performance)

- Implement transparency measures and user notifications (especially for limited-risk systems)

- Establish human oversight mechanisms for high-risk AI decisions

- Test for accuracy, robustness, and cybersecurity

- Set up incident reporting procedures for serious AI incidents

- Register high-risk AI systems in the EU database (Article 49)

- Conduct conformity assessments for high-risk systems

- Appoint an EU-based authorized representative if your company is outside the EU

- Train staff on AI governance and EU AI Act requirements

- Establish ongoing compliance monitoring and documentation procedures

- Review third-party vendors and ensure supply chain compliance

- Document all compliance efforts for regulator requests

Automate Your EU AI Act Compliance Checklist

Who Needs to Comply with the EU AI Act?

- AI Providers

Definition: Companies that develop, train, or place AI systems on the EU market. You’re directly responsible for ensuring your AI systems comply with the EU AI Act.

Your obligations: Risk classification, conformity assessment, documentation, registration for high-risk systems, transparency measures.

- AI Deployers

Definition: Organizations using AI systems to make decisions or deliver services within the EU. Even if you didn’t build the AI, you have compliance responsibilities.

Your obligations: Human oversight, transparency to users, incident monitoring, vendor compliance due diligence.

- Importers & Distributors

Definition: Importers bring AI systems from outside the EU onto the EU market; distributors make those systems available within it. In both cases, you’re liable for ensuring systems meet EU AI Act requirements.

Your obligations: Vendor due diligence, compliance documentation, supply chain oversight.

- Non-EU Companies

Definition: Any company whose AI system outputs are used by people in the EU — regardless of where you’re headquartered. Distance is irrelevant.

Your obligations: Full EU AI Act compliance, designate an EU-based authorized representative.

Key Takeaway:

If your AI affects anyone in the EU, the EU AI Act applies to you, regardless of where your company is incorporated or where your servers are located. This is extraterritorial regulation from Brussels with global reach.

Trusted by Compliance Teams Preparing for the EU AI Act

- Sarah Chen

Chief Compliance Officer, Financial Services Firm

- Dr. Marcus Weber

VP of AI Governance, Technology Company

Backed by the Global AI Certification Council (GAICC)

Govern365.ai is developed by GAICC, the leading independent certification and governance body for AI systems. GAICC expertise ensures our platform reflects real-world compliance requirements.

Frequently Asked Questions About EU AI Act Compliance

Get answers to the most common questions about the EU AI Act, compliance requirements, and how Govern365 helps.

What is the EU AI Act?

When does the EU AI Act take effect?

Who does the EU AI Act apply to?

What are the EU AI Act risk categories?

What are the penalties for non-compliance?

What is a high-risk AI system under the EU AI Act?

What is a conformity assessment under the EU AI Act?

Does the EU AI Act apply to companies outside Europe?

How do I classify my AI systems under the EU AI Act?

What documentation is required for EU AI Act compliance?

How does Govern365 help with EU AI Act compliance?

What are GPAI obligations under the EU AI Act?

Get EU AI Act Compliant Before the Deadline

The clock is ticking. August 2, 2026 is only 16 months away for high-risk AI system compliance. Every week of delay increases your risk of enforcement action and penalties. Organizations that move early have a massive advantage: time to assess, document, remediate, and demonstrate compliance.

Govern365 has helped organizations across 47 countries build audit-ready compliance programs. Join them.